Personal Project · 2026

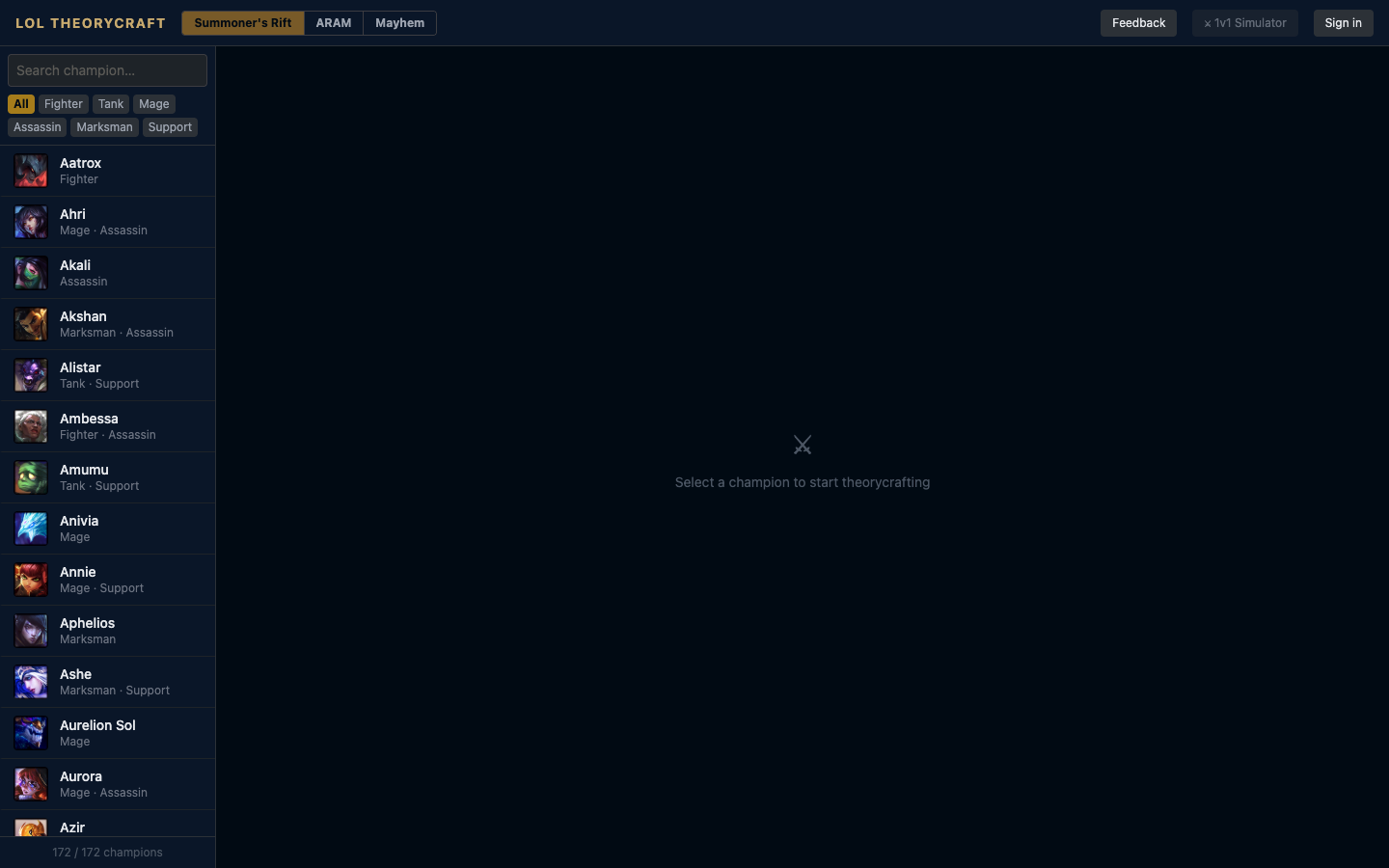

LoL Theorycraft

I play League. I noticed a gap. So I built the tool I wanted to exist.

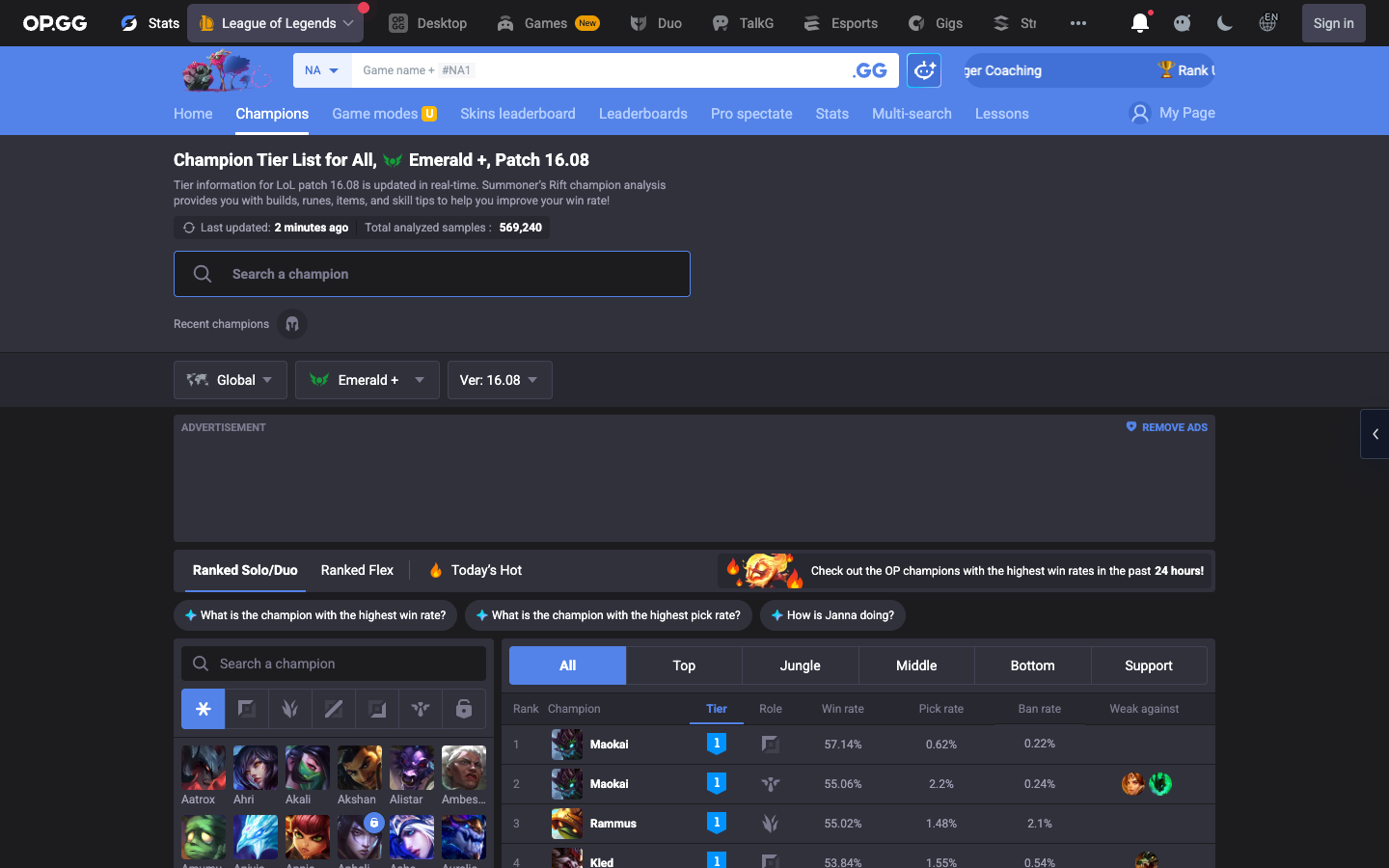

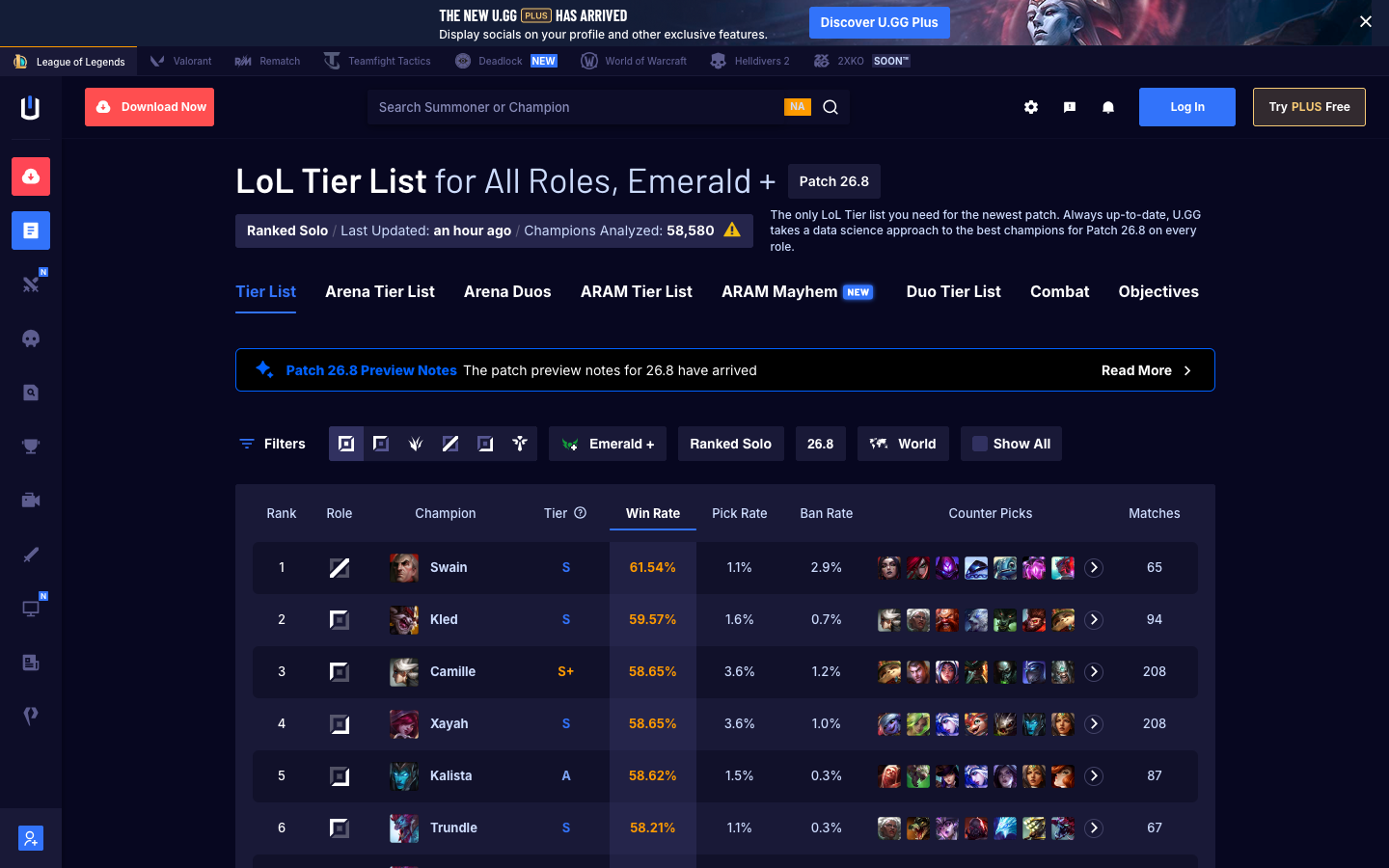

Most League of Legends resources tell you what to build — copy the top-ranked player's loadout, follow the tier list, trust the algorithm. Tools like u.gg and op.gg are powerful, but they're built around one outcome: conformity to the current meta. They answer "what are pros building right now?" — not "why does this item work on this champion?"

I wanted something different. A tool that teaches the reasoning, not just the answer. One that shows a new player why a Sheen item spikes hard at level 6, or helps an experienced player stress-test a rune page against real damage math — without needing to find a specific streamer or parse a Reddit thread to get there.

The tools that exist tell you what to build. None of them explain why.

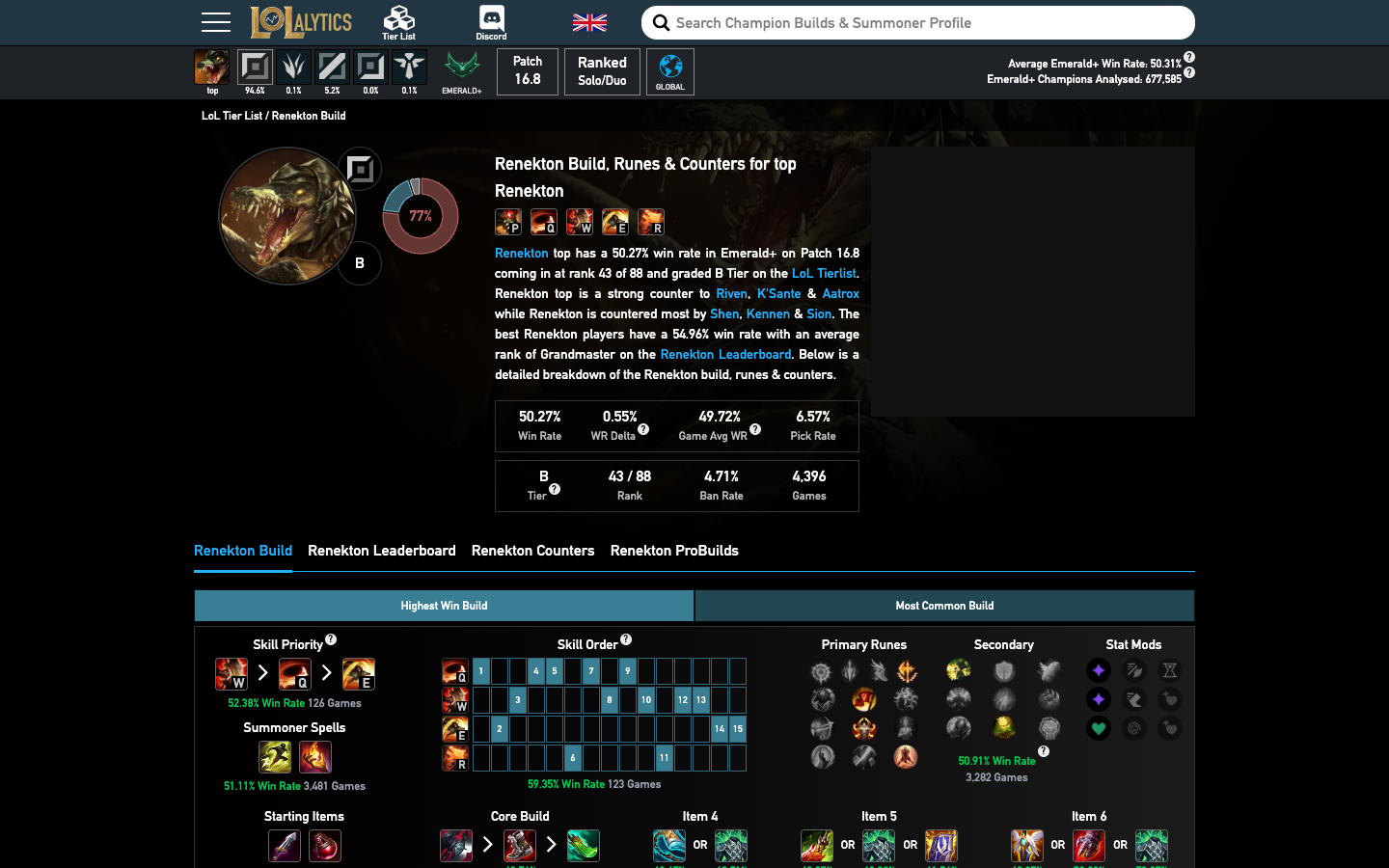

Before building anything, I spent time using the three dominant tools in the space — op.gg, u.gg, and Lolalytics — as both a player and a product designer. Each one is well-executed at what it does. And each one has the exact same blind spot.

All three tools are built on the same model: aggregate millions of games, find what's winning, surface it to the player. That's genuinely useful if your goal is to play the meta. But it creates a passive relationship with the game — you're executing someone else's playbook, not building your own understanding.

None of them answer: why does this item work on this champion specifically? What does my damage curve look like at level 9 vs. level 13? If I swap one rune, how does it change my all-in at 60% health? The math exists. It's in the game. No tool was surfacing it interactively.

The Gap

Every major LoL tool is optimized for the meta-follower. Nobody was building for the tinkerer — the player who wants to understand the game deeply enough to make their own calls.

Two underserved communities. The same unmet need.

Playing ARAM Mayhems and Draft Pick with my regular group, I kept seeing the same pattern surface at both ends of the skill spectrum.

New Players

They had no way to understand what their items actually did — not in the tooltip sense, but mechanically. What does 20 ability haste mean for my cooldown in a real fight? Does this item spike early or scale late? Why does everyone say Rabadon's Deathcap is a "buy it last" item? The info is buried in wiki pages and Reddit threads that assume you already know the vocabulary.

Experienced Players

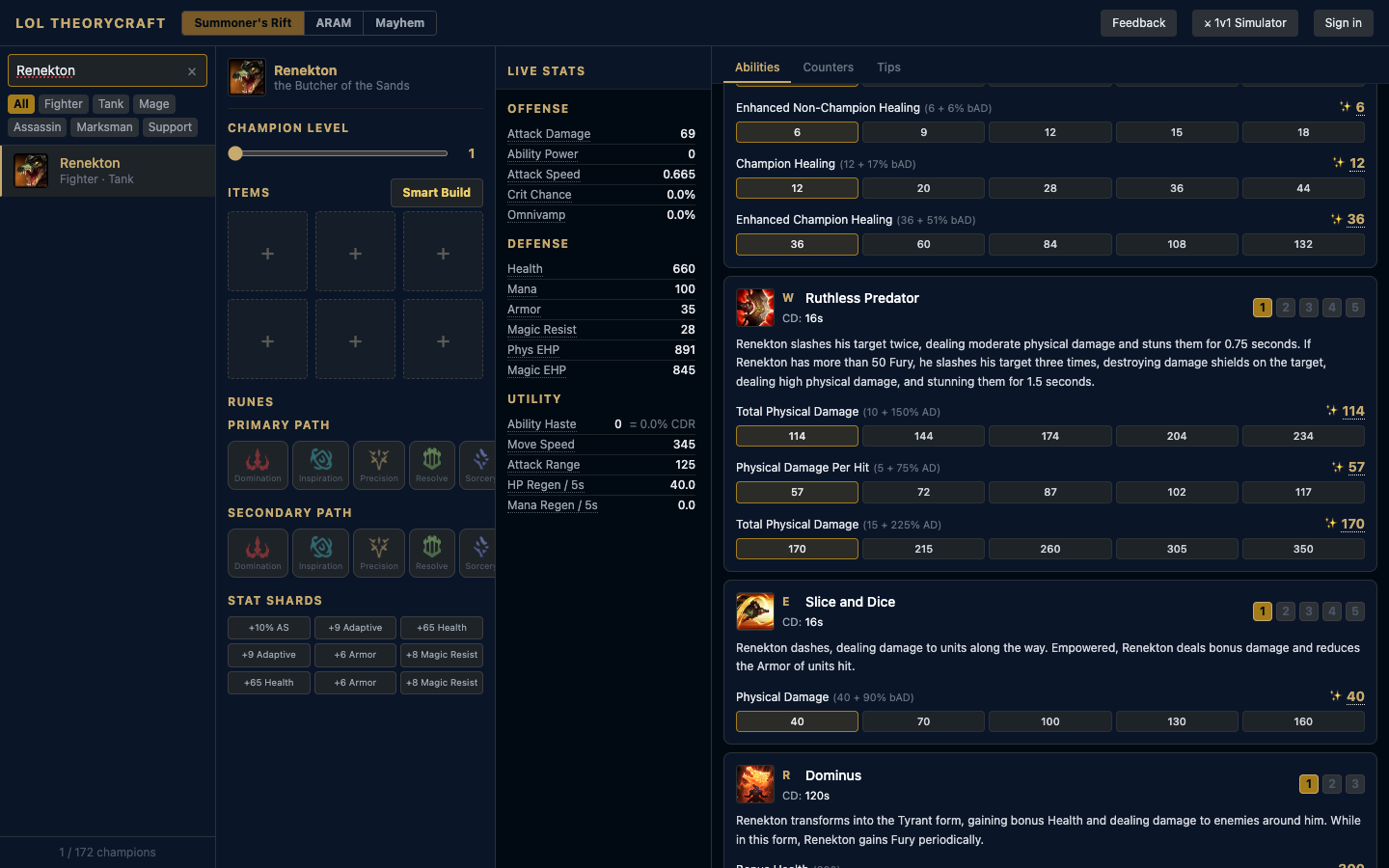

They had no sandbox for off-meta ideas. If you want to try a non-standard build path on Renekton, you're going in blind or relying on a guide from a patch cycle ago. There was no way to mathematically verify an intuition before testing it in a real game at your teammates' expense.

Pick a champion. Build your kit. Watch the math update in real time.

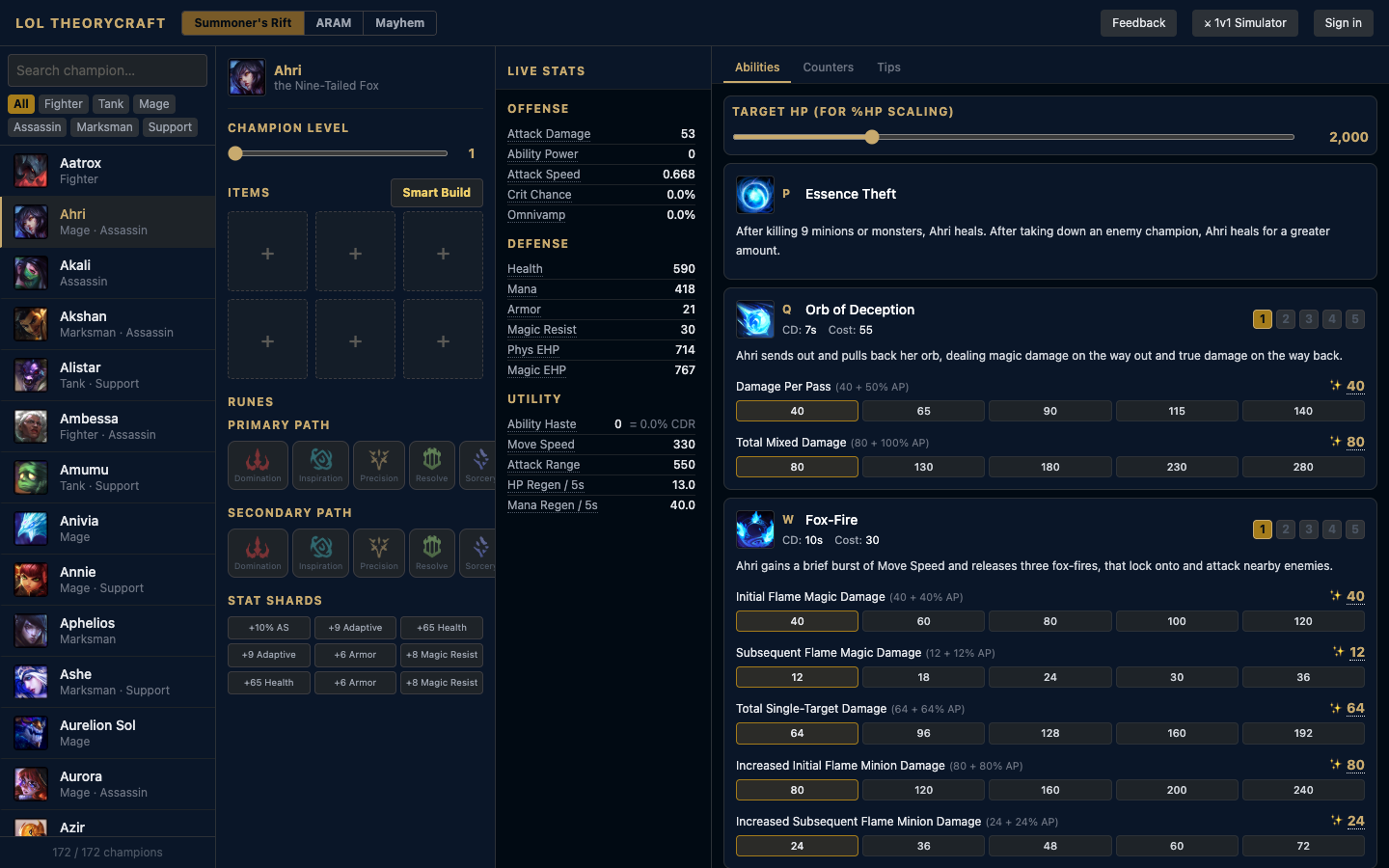

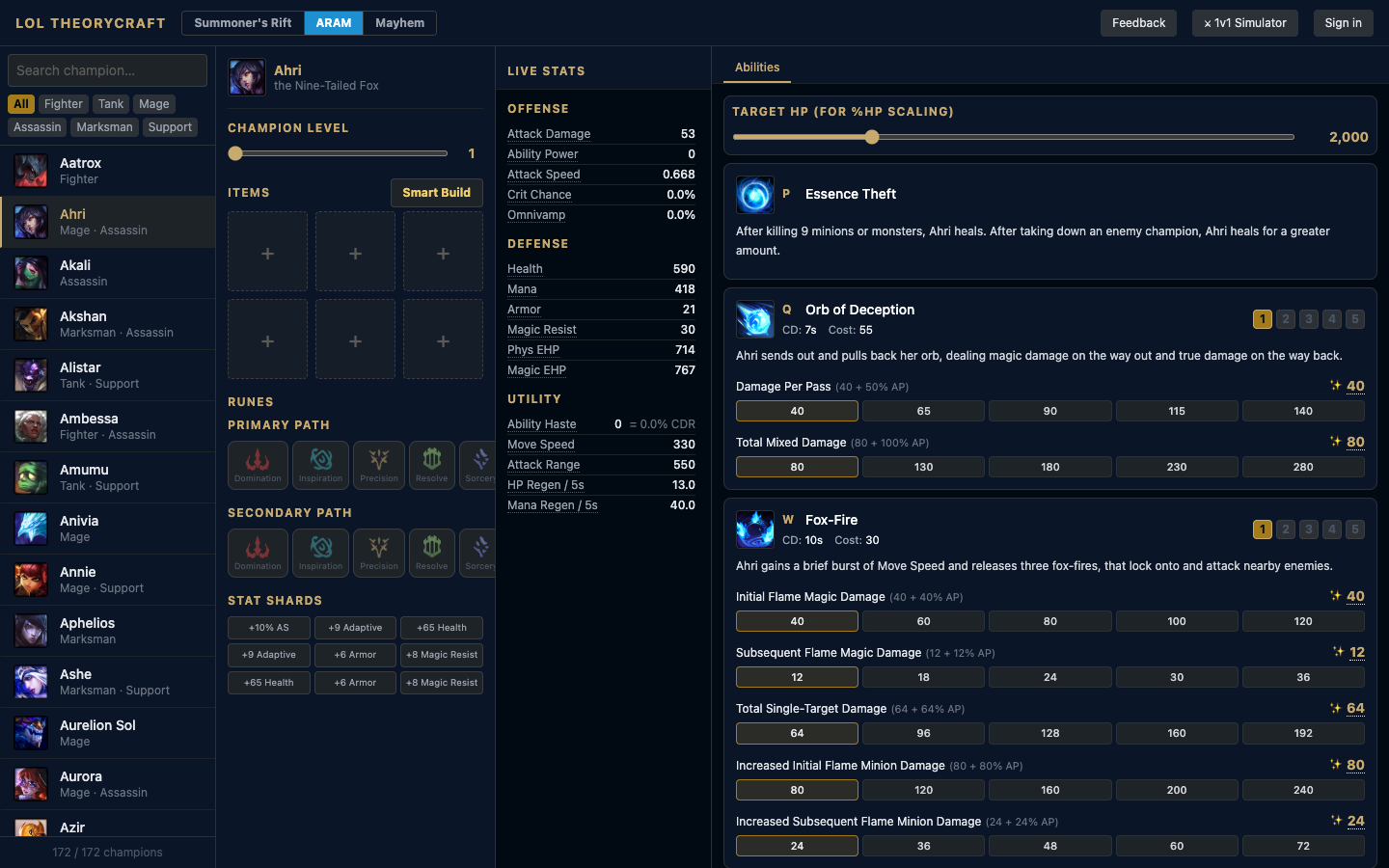

LoL Theorycraft is structured around a single core interaction: select a champion, configure your items, runes, and level — and every stat and ability damage number updates live. No static recommendations. No "trust us, this is best." Just the math, for your exact build, on your exact champion.

The right panel surfaces ability-by-ability damage tables — showing outputs across stat thresholds so you can see the exact breakpoint where an item starts earning its slot. For a champion like Ahri, you can watch Orb of Deception's damage per pass climb from 80 at 40 AP to 142 at 140 AP. For Renekton, you see how Ruthless Predator's physical damage scales against armor — and whether it's worth delaying an item for extra AD.

The left panel handles build configuration: items, runes (primary and secondary path), stat shards, and champion level. The Live Stats column updates every stat — offense, defense, utility — so you always know your full picture. Smart Build auto-populates a recommended starting point, but every slot is editable.
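To make the idea of a damage table concrete, here is a minimal sketch of how one row per AP threshold could be computed. It assumes a simple ability model (flat base damage plus a linear AP ratio) and the standard resistance formula for non-negative armor or magic resist. The `Ability` class, the function names, and the numbers are hypothetical placeholders, not the tool's actual code or any champion's real values.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Ability:
    """Hypothetical ability model: flat base damage plus a linear AP ratio."""
    name: str
    base_damage: float
    ap_ratio: float


def raw_damage(ability: Ability, ap: float) -> float:
    """Damage before the target's resistances are applied."""
    return ability.base_damage + ability.ap_ratio * ap


def mitigated_damage(raw: float, resist: float) -> float:
    """Apply the resistance formula for non-negative armor or magic resist."""
    return raw * 100.0 / (100.0 + resist)


def damage_table(
    ability: Ability, ap_values: List[float], resist: float
) -> List[Tuple[float, float, float]]:
    """Return (AP, raw damage, damage after resist) for each AP threshold."""
    rows = []
    for ap in ap_values:
        raw = raw_damage(ability, ap)
        rows.append((ap, round(raw, 1), round(mitigated_damage(raw, resist), 1)))
    return rows


if __name__ == "__main__":
    # Made-up numbers for illustration only.
    example = Ability(name="Example Q", base_damage=60.0, ap_ratio=0.5)
    for ap, raw, post in damage_table(example, [0, 50, 100], resist=50.0):
        print(f"{ap:>5} AP | raw {raw:>6} | vs 50 MR {post:>6}")
```

Reading down a table like this is what exposes a breakpoint: the row where one more item's worth of AP changes the outcome of a trade.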

Vibe coding a complex domain — where being the user made all the difference.

I built LoL Theorycraft entirely with Claude Code, iterating in tight loops: describe the feature, review the output, test it as a player, identify what was wrong, refine the prompt, repeat. The same workflow I used building Squads — but with a key difference. Here, I was building for a domain I know deeply.

"When you're the user of the thing you're building, vague prompts disappear. Every feature request comes with a success criteria you've already experienced."

That domain fluency changed the quality of what I could build. When Claude generated a damage formula that was off — technically valid as code but not matching how in-game scaling actually works — I caught it immediately, not because I audited the math abstractly, but because I'd seen it behave differently in real matches. Domain knowledge isn't just useful for ideation. It's quality control.

The hardest data modeling challenge was the stat system: champion base stats, per-level growth rates, item stat contributions, rune additive vs. multiplicative bonuses, ability ratios, and the interaction order between all of them. Getting this right required thinking like both a designer (how does a player experience this information?) and an engineer (in what order do these values resolve?). Vibe coding through that problem forced me to hold both frames simultaneously.
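The resolution-order question can be illustrated with a small sketch. It assumes the commonly documented per-level growth curve for champion stats, and it assumes one plausible ordering in which flat bonuses are summed before percentage bonuses are applied. The function names and sample numbers are hypothetical, and the ordering shown is an assumption rather than a description of how the tool resolves values.

```python
from typing import Iterable


def stat_at_level(base: float, growth: float, level: int) -> float:
    """Base stat at a given champion level (1 to 18).

    Uses the commonly documented growth curve, under which the full
    growth * 17 is reached at level 18.
    """
    if not 1 <= level <= 18:
        raise ValueError("level must be between 1 and 18")
    n = level - 1
    return base + growth * n * (0.7025 + 0.0175 * n)


def resolve_stat(
    base: float,
    growth: float,
    level: int,
    flat_bonuses: Iterable[float] = (),
    percent_bonuses: Iterable[float] = (),
) -> float:
    """Assumed order: level scaling, then flat bonuses, then percent bonuses."""
    total = stat_at_level(base, growth, level) + sum(flat_bonuses)
    return total * (1.0 + sum(percent_bonuses))


if __name__ == "__main__":
    # Made-up numbers: 60 base AD, 3 AD per level, two items, one 10% bonus.
    print(resolve_stat(60.0, 3.0, 18, flat_bonuses=[40.0, 25.0], percent_bonuses=[0.10]))
```

Swapping the last two steps changes the result, which is why the order has to be pinned down before any damage number downstream can be trusted.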

What Iterating Taught Me

The first version only showed flat stats. No ability math. Players could see their AD and AP but couldn't see what those numbers meant in practice. That gap was obvious the moment I tested it as a player — and adding the ability damage panel became the most important feature in the tool. You don't know what's missing until you use it.

Distributed through real games. Tested with real players.

The most natural distribution channel was the community I already had: players I queue with in ARAM Mayhems and Draft Pick sessions. I started sharing the tool between queues — in the lobby after a game, when someone was asking why a build felt off, or when a new player was trying to figure out what to build on a champion they'd never played.

Why In-Game Distribution Works

The feedback loop is unusually tight. A player theorycrafts a build, queues up, plays a real game, then comes back with a lived result to compare against the model. That's a faster test-and-learn cycle than most UX research allows: the context is hot, the question is real, and the tool is right there.

Early testing confirmed the split I'd anticipated. New players wanted more plain-language explanation: they needed "this means your Q cooldown drops from 12s to 10s," not just "20 ability haste." Experienced players wanted the raw math up front and didn't need handholding through it.

That tension — simple surface, deep capability — is a familiar design problem. The current approach is progressive disclosure: the tool starts accessible, and depth is always one click away.
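The plain-language translation new players asked for rests on one conversion. Ability haste is not a flat percentage: the standard formula scales a cooldown by 100 / (100 + haste). A minimal sketch follows, with hypothetical function names and a made-up 10-second cooldown rather than any real ability's value.

```python
def cooldown_with_haste(base_cooldown: float, ability_haste: float) -> float:
    """Cooldown in seconds after applying ability haste."""
    if ability_haste < 0:
        raise ValueError("ability haste is assumed to be non-negative here")
    return base_cooldown * 100.0 / (100.0 + ability_haste)


def explain_haste(ability_key: str, base_cooldown: float, ability_haste: float) -> str:
    """Build the kind of plain-language line a new player can act on."""
    new_cooldown = cooldown_with_haste(base_cooldown, ability_haste)
    return (
        f"{ability_haste:g} ability haste: your {ability_key} cooldown "
        f"drops from {base_cooldown:g}s to {new_cooldown:.1f}s"
    )


if __name__ == "__main__":
    print(explain_haste("Q", 10.0, 25.0))
```

The same function can feed both layers of the progressive-disclosure design: the sentence for new players, and the raw cooldown value for players who want the number directly.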

Wider community. Deeper math. Matchup theory.

The core experience is working. The next phase is expanding real-player validation — getting the tool in front of more players across different roles, skill levels, and playstyles, and building structured feedback loops into the product itself rather than relying on informal in-game sharing.

"The goal was never to compete with u.gg. It was to give back to a community that taught me how to play — and fill a gap those tools never cared to fill."

The next major feature I want to build is matchup theory — understanding not just how your build performs in isolation, but how it holds up against specific enemy compositions. The math for that exists in the game. It just needs to be surfaced in a way that's as interactive and readable as the rest of the tool.
